Ying-Huey Fua

yingfua@cs.wpi.edu

Technology has advanced by leaps and bounds over the past decade, making ideas that seemed impossible decades ago realizable. Telecollaboration, video conferencing systems, and shared immersive environments are among the many applications that have emerged from these improvements. Such systems aim to make interpersonal communication more effective. However, users of a shared immersive virtual environment are often required to don head-mounted displays. The headgear is not only cumbersome but may also limit users' mobility.

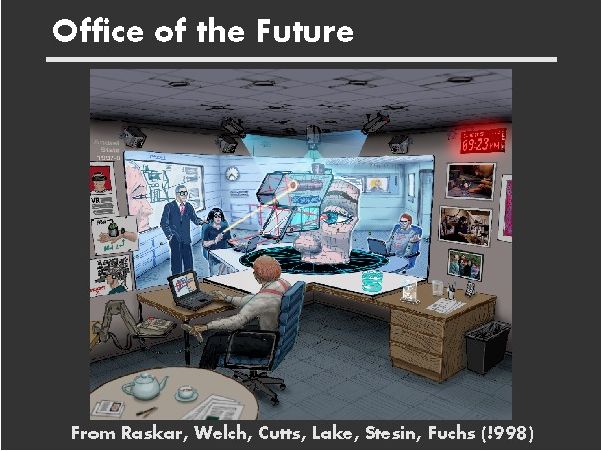

The Office of the Future is a system proposed by a group of researchers at the University of North Carolina at Chapel Hill. Its aim is to build a compelling and useful system for shared telepresence and telecollaboration between distant individuals. As in the CAVE, a well-known shared immersive application, users are not required to wear cumbersome head-mounted displays but are instead surrounded by a spatially immersive display (SID).

Their goal is to build an office-like interface in which a virtual room seems like an extension of the real room and participants can move freely while their locations are tracked. Moreover, they want to build this life-like shared-room experience into existing offices rather than build specialized telecollaboration systems that merely resemble offices (see Figure 1). They use camera-projector pairs mounted on the ceiling to control the lighting and capture what goes on in the room. The display surfaces are simply white foam-core boards mounted on the walls.

The three basic components of their approach are:

This module continually captures, in real time, image-based models of the office environment, including all of the designated display surfaces. A video camera and projector pair is used for depth extraction. Basically, the camera observes a set of n successive images of projected vertical bar patterns, creates binary images using adaptive thresholding on a per-pixel basis, and generates an n-bit code for every pixel, corresponding to the code of the vertical bar that was imaged at that pixel. A precomputed sparse triangulation lookup table, based on calibration data, is then used with trilinear interpolation to compute the intersection of the pseudo-camera rays and the projected vertical planes. The color information about the surfaces is obtained by using the projector as a bright light source along with a synchronized camera. This color information, together with the 3D coordinates of the surface point imaged at each pixel (obtained during depth extraction), completes the image-based model.
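The per-pixel decoding step can be illustrated with a short sketch. This is not the authors' implementation: it assumes plain binary coding with the most significant bit projected first, and it assumes two extra reference images (projector fully off and fully on) from which a separate threshold is derived for every pixel. The function name `decode_bar_codes` and the list-of-rows image layout are hypothetical.

```python
def decode_bar_codes(patterns, dark, lit):
    """Return a per-pixel integer code from n structured-light images.

    patterns: list of n images (each a list of rows of intensities),
              assumed to be ordered most significant bit first.
    dark, lit: reference images taken with the projector fully off and
               fully on (an assumption of this sketch), used to derive
               a separate threshold for every pixel.
    """
    height, width = len(dark), len(dark[0])
    codes = [[0] * width for _ in range(height)]
    for y in range(height):
        for x in range(width):
            # Adaptive threshold: midpoint between this pixel's dark
            # and lit responses, so surface color does not matter.
            threshold = (dark[y][x] + lit[y][x]) / 2.0
            code = 0
            for image in patterns:
                bit = 1 if image[y][x] > threshold else 0
                code = (code << 1) | bit  # append the next bit
            codes[y][x] = code
    return codes


# Tiny synthetic example: one row of 4 pixels, n = 2 patterns.
dark = [[10, 20, 10, 30]]
lit = [[200, 220, 180, 250]]
patterns = [
    [[10, 20, 180, 250]],   # most significant bit
    [[10, 220, 10, 250]],   # least significant bit
]
codes = decode_bar_codes(patterns, dark, lit)
print(codes)
```

Each resulting code identifies which projected vertical bar, and hence which projected plane, was seen at that pixel; the triangulation lookup described above would then turn the pixel and its code into a 3D point.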

Rendering and Display Modules

Their aim is to generate images that appear correct to an observer when projected onto potentially irregular surfaces. A magnetic head-tracking device is used to determine the viewer's location. The inputs to their two-pass approach are:

In the second pass, the texture obtained from the first pass is projected from the user's viewpoint onto the polygonal model of the display surface. The display surface is then rendered from the projector's viewpoint. The resulting image, when displayed by the projector, produces the desired image for the viewer. As the user moves, the desired image changes and is projected from the viewer's new location.
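The geometry of the second pass can be sketched as a pair of projections per display-surface point. The sketch below is only an illustration under strong simplifying assumptions: both the viewer and the projector are modeled as ideal pinhole devices looking along +z with no rotation, and the names `project` and `second_pass` are hypothetical. A real system would use full calibrated projection matrices and texture-mapping hardware.

```python
def project(point, center, focal_length):
    """Pinhole projection for a device at `center` looking along +z
    with no rotation (a simplifying assumption of this sketch)."""
    x, y, z = (p - c for p, c in zip(point, center))
    if z <= 0:
        raise ValueError("point is behind the projection center")
    return (focal_length * x / z, focal_length * y / z)


def second_pass(surface_points, viewer_pos, projector_pos, focal_length=1.0):
    """For each display-surface point, pair the projector image location
    that lights it with the location in the first-pass image that the
    viewer should see there."""
    mapping = []
    for point in surface_points:
        # Where the viewer sees this surface point: the texture
        # coordinate into the image rendered in the first pass.
        texture_coord = project(point, viewer_pos, focal_length)
        # Where the projector must draw to hit this surface point.
        projector_coord = project(point, projector_pos, focal_length)
        mapping.append((projector_coord, texture_coord))
    return mapping


# Three points on an irregular display surface (different depths).
surface_points = [(0, 0, 2), (1, 0, 2), (2, 1, 4)]
mapping = second_pass(surface_points, viewer_pos=(0, 0, 0),
                      projector_pos=(1, 0, 0))
for projector_coord, texture_coord in mapping:
    print(projector_coord, "<-", texture_coord)
```

When the viewer moves, only `viewer_pos` changes, so the texture coordinates are recomputed while the projector coordinates of the surface points stay fixed.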

Limitations

The Office of the Future presents initial results for a semi-immersive display in an office-like environment. Perhaps in the future we will not need to cram all our information onto a small monitor, but will be able to use the space around us as display surfaces. We may also no longer need to travel to offices elsewhere in the world to communicate with our peers, since communication may be available through immersive environments. On the other hand, for such systems to be truly effective and useful in daily life, much more research and usability testing needs to be conducted.

Reference

The material in this write-up is drawn from the following paper:

The Office of the Future: A Unified Approach to Image-Based Modeling and Spatially Immersive Displays

R. Raskar, G. Welch, M. Cutts, A. Lake, L. Stesin and H. Fuchs.

Proc. of SIGGRAPH 98, pp. 179-188.